In the previous writeup, I replaced the thermal paste on the CPU and GPU. So what were the results…?

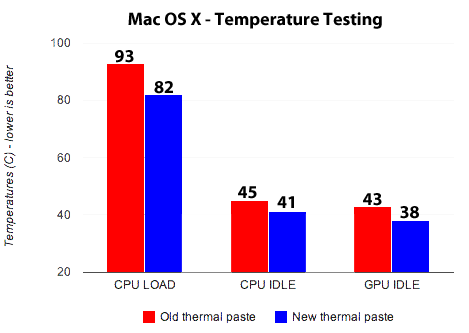

First up, some numbers from Mac OS X.

Load temps were recorded while running the Prime95 stress test for the Mac. The other numbers were taken in Mac OS X at idle. All values were pulled from iStat Pro.

The load temps were taken after a few minutes at load in Prime95 (Mac OS X). Initially, they went up quite high (possibly to 100˚, although iStat wouldn't update quickly enough to verify the peak temps). These are the numbers they settled at. It's worth noting that I also ran tests in Windows (OCCT/Linpack), and both times, 100˚ was reached. With the new thermal paste, though, only 1 core made it to 100˚, and it got there more slowly. With the old paste, 2-3 cores would make it to 100˚ each time, and much more quickly. In both cases, the temperature would drop to around 90˚ once the fans sped up to full tilt.

Idle temps don't show as significant a difference, but there were gains to be had.

Update: Despite the claims that the Noctua paste doesn't need time to cure, a couple days later, I re-ran OCCT and found that the temperature rose only to 97˚ (instead of hitting 100˚ and causing the thermal protection to trip). A definite improvement.

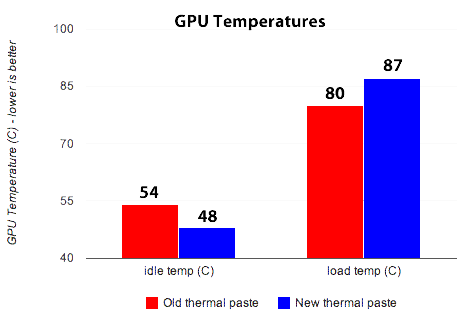

Now it's time for the surprise… Next up: GPU testing in Windows. I ran Furmark for this one to work the bananas out of the GPU.

Wowz. With the new paste, there are lower idle temps, but higher load temps (surprise!). I was shocked until I spent a few moments thinking about it.

Want to know how it's possible? I'll tell you.

The old thermal paste was so thick that it insulated the CPU and GPU cores to an extent. The heatsink always stayed a little cooler than the cores, because there was poor thermal transfer. This (arguably) worked out well for the GPU, as it didn't have to eat the high temps from the CPU core.

With the new paste, both the CPU and GPU have excellent contact with the heatsink. In fact, it's so good that they're essentially “linked” when it comes to temperature.
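To put rough numbers on that "linked" idea, here's a toy steady-state model: each die sits at the heatsink temperature plus (power × thermal resistance of the paste). This is only a sketch. The function names, wattages, and resistance values are made up for illustration and aren't measurements from this MacBook Pro, and the model ignores fans, throttling, and anything that changes over time.

```python
def die_temp(sink_temp_c, power_w, paste_resistance_c_per_w):
    """Steady-state die temperature in a single-resistance toy model."""
    return sink_temp_c + power_w * paste_resistance_c_per_w


def die_gap(sink_temp_c, cpu_power_w, gpu_power_w, paste_resistance_c_per_w):
    """Temperature difference between two dies sharing one heatsink."""
    cpu = die_temp(sink_temp_c, cpu_power_w, paste_resistance_c_per_w)
    gpu = die_temp(sink_temp_c, gpu_power_w, paste_resistance_c_per_w)
    return abs(cpu - gpu)


# Hypothetical values, chosen only to show the trend.
SINK_TEMP_C = 70.0
CPU_POWER_W = 30.0
GPU_POWER_W = 10.0
THICK_PASTE = 0.5    # C/W, assumed "old paste" resistance
THIN_PASTE = 0.125   # C/W, assumed "new paste" resistance

gap_old = die_gap(SINK_TEMP_C, CPU_POWER_W, GPU_POWER_W, THICK_PASTE)
gap_new = die_gap(SINK_TEMP_C, CPU_POWER_W, GPU_POWER_W, THIN_PASTE)
print(f"gap with thick paste: {gap_old} C, with thin paste: {gap_new} C")
```

In this model, the lower the paste's resistance, the closer each die sits to the heatsink temperature, and so the closer the two dies sit to each other.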

Furmark (despite largely being a GPU test) actually cranked up the temperature of the CPU as well, to high heat & load levels. This heat was transferred to the GPU through the MacBook Pro's shared heatsink.

-

Sound like a bunch of crazy? Here's a little bit of data to back up that claim:

Since the images are a bit small, you can click on them to see the larger view. It was actually pretty cool to watch (once I realized what was happening and started watching both temps at the same time & taking screenshots).

During cooldown, the temperatures decreased in lock-step. At the higher temps (70+), they'd be exactly the same. One would drop 1 degree, then the other would quickly follow.

As temps got lower, the temperature difference between them would grow… 2 degrees difference, 3 degrees difference, and eventually they'd split apart, getting to their individual idle readings once the temperatures were low enough (although it took a very long time to drop back down that low).
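If you'd rather log the readings than take screenshots, the pattern above is easy to check in a few lines. This is a minimal sketch: the helper names are my own, and the sample readings are invented to mimic the behavior described (they are not my actual data).

```python
def temp_gaps(cpu_temps, gpu_temps):
    """Absolute CPU/GPU difference for each paired reading."""
    if len(cpu_temps) != len(gpu_temps):
        raise ValueError("need the same number of CPU and GPU readings")
    return [abs(c - g) for c, g in zip(cpu_temps, gpu_temps)]


def first_divergence(cpu_temps, gpu_temps, threshold=2):
    """Index of the first reading where the gap reaches `threshold` degrees.

    Returns None if the two sensors never drift that far apart.
    """
    for i, gap in enumerate(temp_gaps(cpu_temps, gpu_temps)):
        if gap >= threshold:
            return i
    return None


# Made-up cooldown readings: identical at 70+, drifting apart below that.
cpu = [80, 75, 70, 64, 58, 52]
gpu = [80, 75, 70, 65, 60, 55]

print(temp_gaps(cpu, gpu))
print(first_divergence(cpu, gpu))
```

The `threshold` of 2 degrees is an arbitrary pick. Raise it if your sensors are noisy.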

-

Conclusion

Re-applying the thermal paste worked quite well. GPU and CPU idle temps dropped rather significantly on the MacBook Pro.

The CPU still hit the thermal limit (verified in Windows), but it rose more slowly and came very close to capping at 99˚ with the new paste. I'm pleased there was some improvement here, but not thrilled. I know what you're thinking… “You dropped your load temps by 11 degrees, though! You should be ecstatic!” True. That initial climb to 100˚ is worrisome, though. I was really hoping it'd be avoided.

(Update: In case you missed it above, a couple days later, the temps only reached 97˚. It looks like the Noctua paste needed a bit of curing time after all.)

That said, few applications push a CPU to the temps that OCCT/Furmark do. It's possible that I'll never actually hit 100 degrees again in normal usage.

The largest downside to the thermal paste re-application appeared to be the GPU's exposure to the CPU's temps. I wasn't monitoring GPU temps during OCCT/Linpack, but based on the above observations with Furmark, I'd imagine they probably rose quite a bit. I'm not sure whether the high temp is harmful to the GPU when it isn't actually doing anything, but I think it's safe to say that a high temp on 1 chip is going to cause a high temp on the other.
